Cold Email: Auto-Fill Personalized {{Custom_Variables}} with AI

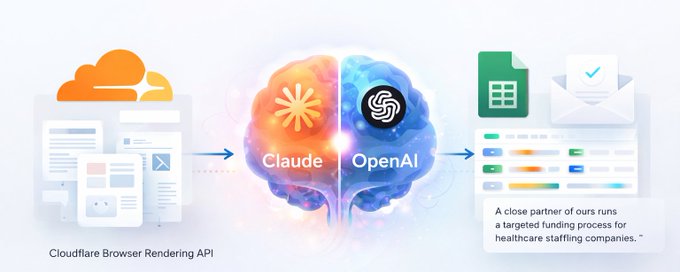

How to use Claude to scrape company websites and auto-fill personalized variables in cold email campaigns — so every email reads like it was written specifically for that prospect's company.

If you've ever tried to scale cold email personalization beyond swapping in a first name, you know the problem: real personalization requires research, and research doesn't scale. This article walks through a repeatable system where Claude does the research for you — scraping company websites, classifying what the business actually does, and filling in custom variables across thousands of contacts before a single email is sent.

By the end you'll understand the full architecture: how to write copy with intentional holes in it, how those holes get filled using live website data, and how that personalized data flows into your sending platform so every email reads like it was written specifically for that person's company.

The Core Idea

Instead of writing one email that says "we help businesses like yours," you write copy with a placeholder like {{sector_relevantfundingtype}} — and that placeholder gets resolved, per row, to something like "automotive dealerships" or "commercial real estate services" or "healthcare staffing" depending on what the company actually does.

The result is an email that reads: "A close partner of ours runs a targeted funding process for healthcare staffing companies." Instead of: "A close partner of ours runs a targeted funding process for businesses like yours."

The difference is significant. One sounds like mass mail. The other sounds like someone did homework. Claude handles the homework at scale.
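The substitution itself is simple string templating. As a minimal sketch (the `render` helper is hypothetical and only imitates what a sending platform does at send time), resolving a token against one row looks like this:

```python
import re

# Matches {{name}} tokens, allowing optional spaces inside the braces.
TOKEN = re.compile(r"\{\{\s*(\w+)\s*\}\}")

def render(template: str, row: dict) -> str:
    """Replace each {{name}} token with the row's value for that name."""
    def lookup(match: re.Match) -> str:
        name = match.group(1)
        # Leave unknown tokens untouched so a missing column is easy to spot.
        return str(row.get(name, match.group(0)))
    return TOKEN.sub(lookup, template)

template = ("A close partner of ours runs a targeted funding process "
            "for {{sector_relevantfundingtype}} companies.")
row = {"sector_relevantfundingtype": "healthcare staffing"}
print(render(template, row))
# A close partner of ours runs a targeted funding process for healthcare staffing companies.
```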

What You'll Need

1. Claude (via Claude.ai or Claude Code)

Claude is the AI engine doing the heavy lifting — writing your copy with variables, generating the classification prompts, drafting the Python scripts that scrape and fill, and QA-ing the output. You do not need to write code yourself. To get started: claude.ai for chat-based use, or install Claude Code via the terminal for a more persistent, project-aware agent experience.

2. Google Sheets

This is your live working surface. Every contact row lives here. Every enriched column gets written back here. Nothing important lives in a local CSV — the Sheet is the source of truth. Any Google account gives you Sheets access at sheets.google.com.

3. Cloudflare Browser Rendering API

This is how you scrape websites that use JavaScript to render their content — which is most modern company sites. Without this, a plain HTTP request to a company homepage often returns a skeleton with no useful text. Cloudflare's browser rendering renders the page the way a real browser would, then returns clean markdown. Sign up at cloudflare.com, enable the Browser Rendering API under Workers & Pages, and grab your API token and account ID from the dashboard.

4. OpenAI API (GPT-4.1-mini or 4.1-nano)

After scraping raw page text, you need a model to read that text and distill it into a clean noun phrase: "automotive dealerships," not a vague description. GPT-4.1-mini and GPT-4.1-nano are both fast and cheap for classification tasks like this. Sign up at platform.openai.com, create an API key, and add funds to your account.

5. Instantly.ai (or any sending tool with an API)

This is the cold email sending platform. It accepts custom variables per lead and substitutes them at send time inside your copy. Your scraped and classified values get imported as columns that Instantly maps to {{variable_name}} tokens in your sequence copy. Sign up at instantly.ai and connect your sending domains and mailboxes.

6. Python (3.9+)

Claude will write Python scripts for you. You'll run them locally or in a terminal environment. You don't need to be a developer — you just need Python installed and a basic ability to run a script. Download from python.org. On Mac, it's often already installed. Run python3 --version in your terminal to check.

Step 1: Write Copy With Intentional Holes

Before any scraping happens, you write your email copy — but you leave certain sentences incomplete by design. Instead of filling in a specific description of what a company does, you place a variable token in that slot.

Tell Claude something like:

"I'm writing a cold email for [your offer/industry]. I want the email to reference what type of business the recipient is in, using a variable called {{sector_relevantfundingtype}}. Write a 4–6 sentence email where this variable appears naturally in the body, making a sentence about how our service is relevant to companies in their specific sector. Leave the variable as a literal placeholder; don't fill it in."

Claude will return copy like:

{{firstName}},

One of our close partners runs a targeted process specifically for {{sector_relevantfundingtype}} companies, typically getting answers in 2–3 weeks rather than the 3–4 months a traditional path takes.

Worth a 15-minute call to see if there's fit?

[Signature]

The key discipline here: put the variable where it makes the sentence more specific, and make sure the sentence does not read awkwardly if a generic fallback value lands in that slot. The sentence should still make sense at a high level even before the variable is filled.

For your subject lines, you can do the same thing. One subject line variant uses a per-row variable like {{subject_line}} which gets populated with something like "working capital line?" or "equipment financing?" depending on what the company does.
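Before moving on, it can help to confirm that every token in the copy will have a column behind it. The sketch below is one hedged way to do that; `unmapped_tokens` is a hypothetical helper, and treating `firstName` as a platform built-in is an assumption based on the column table in Step 2.

```python
import re

def tokens_in(copy_text: str) -> set:
    """Every {{name}} token that appears in the copy."""
    return set(re.findall(r"\{\{\s*(\w+)\s*\}\}", copy_text))

def unmapped_tokens(copy_text, columns, builtins=("firstName",)):
    """Tokens in the copy with no matching column or built-in variable."""
    known = set(columns) | set(builtins)
    return sorted(tokens_in(copy_text) - known)

body = (
    "{{firstName}},\n\n"
    "One of our close partners runs a targeted process specifically for "
    "{{sector_relevantfundingtype}} companies."
)
# The enrichment column has not been added to the Sheet yet in this example.
columns = ["first_name", "email", "website", "subject_line"]
print(unmapped_tokens(body, columns))
# ['sector_relevantfundingtype']
```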

Step 2: Set Up Your Google Sheet

Your Google Sheet is the hub. It needs at minimum these columns to start:

| Column | Purpose |
|---|---|
| first_name | Used in {{firstName}} variable |
| last_name | Contact last name |
| email | Primary send address |
| company_name | Company display name |
| website | Used for scraping |
| title | Contact job title |
| city | Optional personalization |
| state | Optional personalization |
| industry | Fallback when scraping fails |

You'll add enrichment columns as you go:

| Column | Purpose |
|---|---|
| site_content | Raw scraped text (internal only, never imported to sending platform) |
| sector_relevantfundingtype | Classified noun phrase; the final variable value |
| subject_line | Per-row generated subject line variable |
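A quick header check catches missing columns before any script runs. This is a sketch only: it assumes the Sheet has already been read into a list of lists with the header first, and the helper names are hypothetical.

```python
REQUIRED = ["first_name", "last_name", "email", "company_name", "website",
            "title", "city", "state", "industry"]
ENRICHMENT = ["site_content", "sector_relevantfundingtype", "subject_line"]

def check_header(header):
    """Return (missing required columns, header extended with enrichment columns)."""
    missing = [c for c in REQUIRED if c not in header]
    extended = list(header) + [c for c in ENRICHMENT if c not in header]
    return missing, extended

def rows_as_dicts(values):
    """Turn a list-of-lists Sheet read (header first) into one dict per row."""
    header, *rows = values
    # Short rows are padded with "" so every dict has every column.
    return [dict(zip(header, row + [""] * (len(header) - len(row)))) for row in rows]

values = [["first_name", "email", "website"], ["Ana", "ana@example.com"]]
missing, extended = check_header(values[0])
print(missing)                 # required columns the Sheet still lacks
print(rows_as_dicts(values))   # the short row gets an empty website value
```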

Step 3: Scrape the Websites

Tell Claude something like:

"I have a Google Sheet with a website column. I want to scrape each company's website using the Cloudflare Browser Rendering API. Try the /markdown endpoint first on the homepage, and fall back to a plain HTTP GET if Cloudflare fails or times out. Write the scraped text into a column called site_content in the same Sheet. Use async workers so it runs reasonably fast across 1,000+ rows. Cache results by domain so re-running doesn't re-scrape sites we've already processed. My Cloudflare API token and account ID are in environment variables."

Claude will produce a Python script, something like fill_site_content.py, that reads your Sheet via the Google Sheets API, iterates through rows with a populated website column and empty site_content column, calls Cloudflare's /markdown endpoint for each domain, falls back to a plain HTTP GET if Cloudflare fails, writes the scraped text back to site_content, and caches results in a local JSON file keyed by domain so you can restart safely.
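The core of such a script might look like the sketch below. Several details are assumptions rather than anything the article confirms: the environment variable names, the cache file name, and the request and response shape of the `/markdown` endpoint (a JSON body with `url`, a JSON reply with `result`). Check these against Cloudflare's current documentation. The Sheets read and write are left out.

```python
import json
import os
import urllib.request
from pathlib import Path
from urllib.parse import urlparse

CACHE_PATH = Path("site_content_cache.json")  # hypothetical file name

def domain_of(url: str) -> str:
    """Normalize 'https://www.Example.com/about' to 'example.com'."""
    host = urlparse(url if "//" in url else "//" + url).netloc.lower()
    return host[4:] if host.startswith("www.") else host

def fetch_cloudflare(url: str, timeout: float = 30.0) -> str:
    """POST the URL to Browser Rendering's /markdown endpoint (assumed shape)."""
    endpoint = (
        "https://api.cloudflare.com/client/v4/accounts/"
        f"{os.environ['CLOUDFLARE_ACCOUNT_ID']}/browser-rendering/markdown"
    )
    request = urllib.request.Request(
        endpoint,
        data=json.dumps({"url": url}).encode(),
        headers={
            "Authorization": f"Bearer {os.environ['CLOUDFLARE_API_TOKEN']}",
            "Content-Type": "application/json",
        },
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response).get("result") or ""

def fetch_plain(url: str, timeout: float = 15.0) -> str:
    """Fallback: a plain HTTP GET that returns the raw HTML."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")

def scrape(url, cache, primary=fetch_cloudflare, fallback=fetch_plain):
    """Return page text for a URL, consulting the domain-keyed cache first."""
    key = domain_of(url)
    if key in cache:
        return cache[key]
    text = ""
    for fetch in (primary, fallback):
        try:
            text = fetch(url)
        except Exception:
            text = ""  # any failure or timeout means "try the next fetcher"
        if text.strip():
            break
    if text.strip():
        cache[key] = text  # only successes are cached, so failures get retried
    return text

def load_cache(path=CACHE_PATH):
    return json.loads(path.read_text()) if path.exists() else {}

def save_cache(cache, path=CACHE_PATH):
    path.write_text(json.dumps(cache))
```

Passing the fetchers in as arguments keeps the fallback logic testable without network access, and makes it easy to swap in a different renderer later.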

Run the script and watch it fill in the site_content column in your live Sheet. Expect roughly 80–90% fill rates on a typical B2B list — some company sites simply don't have enough useful text.
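The "async workers" part of the prompt can be sketched with a semaphore that caps how many scrapes run at once. Here `scrape_one` stands in for whatever blocking scrape function the script uses, and both the default of 10 workers and the `fill_rate` helper are illustrative assumptions.

```python
import asyncio

async def scrape_all(urls, scrape_one, max_workers: int = 10):
    """Run a blocking scrape_one(url) over many URLs with bounded concurrency."""
    limit = asyncio.Semaphore(max_workers)

    async def worker(url):
        async with limit:
            # to_thread keeps the event loop free while the blocking call runs.
            return url, await asyncio.to_thread(scrape_one, url)

    pairs = await asyncio.gather(*(worker(u) for u in urls))
    return dict(pairs)

def fill_rate(results: dict) -> float:
    """Share of URLs that came back with non-empty text."""
    if not results:
        return 0.0
    filled = sum(1 for text in results.values() if text.strip())
    return filled / len(results)

if __name__ == "__main__":
    fake = lambda url: "" if url == "b.com" else "text from " + url
    results = asyncio.run(scrape_all(["a.com", "b.com", "c.com"], fake, max_workers=2))
    print(f"fill rate: {fill_rate(results):.0%}")
```

`asyncio.to_thread` requires Python 3.9 or later, which matches the version listed under What You'll Need.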

Step 4: Classify the Scraped Text Into a Variable Value

Raw scraped text is not ready for email copy. You need to distill it into a clean 2–4 word noun phrase that describes the company's business type at a level of specificity that sounds like a human researched it.

Tell Claude something like:

"I want to classify the scraped site_content for each row into a short noun phrase, 2 to 4 words, describing what type of business this company is. Call the output column sector_relevantfundingtype. It should be like 'automotive dealerships' or 'commercial real estate brokerage' or 'healthcare staffing': specific enough to feel personalized, but generic enough that it works for a company in that space. If site_content is thin, fall back to the industry column from the source data. If both are thin, return blank. Never make the phrase sound promotional. Write a Python script that calls GPT-4.1-mini with a classification system prompt, processes the Sheet row by row, and writes the results back. Cache by domain."

The system prompt Claude writes for the GPT call is the critical piece. It instructs the model to be specific: not "financial services" but "independent insurance agencies." Not "technology" but "cloud infrastructure software." The specificity is what makes the variable read naturally in copy.
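A rough sketch of the classification step follows. The system prompt wording, the 200-character "thin content" threshold, the 6,000-character trim, and the `OPENAI_API_KEY` variable name are all assumptions for illustration. The request targets OpenAI's Chat Completions endpoint, and the model call is injectable so the fallback logic can be exercised offline.

```python
import json
import os
import urllib.request

SYSTEM_PROMPT = (
    "You label companies by business type. Reply with a 2 to 4 word noun phrase "
    "such as 'independent insurance agencies' or 'cloud infrastructure software'. "
    "Be specific, never promotional. Reply BLANK if the text is too thin."
)

def call_gpt(site_content: str, model: str = "gpt-4.1-mini") -> str:
    """Send one classification request to the Chat Completions endpoint."""
    payload = {
        "model": model,
        "temperature": 0,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": site_content[:6000]},  # trim long pages
        ],
    }
    request = urllib.request.Request(
        "https://api.openai.com/v1/chat/completions",
        data=json.dumps(payload).encode(),
        headers={
            "Authorization": f"Bearer {os.environ['OPENAI_API_KEY']}",
            "Content-Type": "application/json",
        },
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.load(response)["choices"][0]["message"]["content"]

def clean_phrase(raw: str) -> str:
    """Keep the reply only if it is a plain 2-4 word phrase; otherwise blank."""
    phrase = raw.strip().strip("\"'.").strip().lower()
    if phrase == "blank" or not 2 <= len(phrase.split()) <= 4:
        return ""
    return phrase

def classify(row: dict, model_call=call_gpt, min_chars: int = 200) -> str:
    """Classify from site_content, else fall back to industry, else blank."""
    content = row.get("site_content", "")
    if len(content) >= min_chars:
        phrase = clean_phrase(model_call(content))
        if phrase:
            return phrase
    return row.get("industry", "").strip().lower()
```

Validating the reply in `clean_phrase` rather than trusting the model means a rambling or one-word answer falls through to the industry fallback instead of landing in an email.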

Step 5: Handle the Rows That Didn't Fill

Expect 10–20% of rows to come back blank — either because the website was down, the homepage had no useful text, or the classification model didn't have enough signal. This is normal. You handle it with a recovery pass.

Tell Claude something like:

"Some rows in my sector_relevantfundingtype column are still blank after the first pass. For those rows, I want to try scraping fallback pages from the same domain: About, Services, Solutions, Industries, in that priority order. Try each page until one has useful content. If any page has content, re-run classification. If classification still fails after 8 attempts (try with progressively relaxed prompting), fall back to the raw industry column value. If that's also blank, write 'commercial operators' as a safe catch-all. No row should be left blank."

Claude will produce a recovery script. The goal is zero blank rows by the time you're done. Every contact gets a value, even if the last-resort fallback is a generic phrase.
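The fallback chain in that prompt can be sketched as a single function. The URL paths are guesses at where those pages usually live, and `fetch` and `classify_text` are hypothetical callables supplied by the rest of the script. `classify_text` receives the attempt number so it can relax its prompt as attempts increase.

```python
FALLBACK_PATHS = ["/about", "/services", "/solutions", "/industries"]  # assumed paths
CATCH_ALL = "commercial operators"

def recover(row, fetch, classify_text, max_attempts: int = 8) -> str:
    """Fill a blank sector value: fallback pages, then industry, then catch-all."""
    base = row["website"].rstrip("/")
    for path in FALLBACK_PATHS:
        try:
            text = fetch(base + path)
        except Exception:
            continue  # page missing or site down: try the next one
        if not text.strip():
            continue
        # Classify the first page that has content, retrying up to max_attempts.
        for attempt in range(1, max_attempts + 1):
            phrase = classify_text(text, attempt)
            if phrase:
                return phrase
        break  # classification exhausted; stop scraping and use the fallbacks
    return row.get("industry", "").strip() or CATCH_ALL
```

Because the final line always returns either the industry value or the catch-all, no row that passes through `recover` ends up blank.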

Step 6: Clean Spam-Trigger Words Out of Your Variable Values

This is a step most people skip — and it causes deliverability problems. GPT will sometimes produce noun phrases that contain words email spam filters flag: "insurance," "loans," "financial," "mortgage," "credit," and others. These words, even inside a variable that's only a few words long, can affect inbox placement.

Tell Claude something like:

"Scan every value in the sector_relevantfundingtype column against this list of banned words: [list your banned words]. For any value containing a banned word, rewrite the phrase to avoid it while preserving the meaning. For example, 'insurance agencies' becomes 'coverage groups.' 'Mortgage brokers' becomes 'residential lending groups.' Write a script that applies these rewrites and updates the Sheet in place."

Claude will produce a cleanup script that reads a substitution map, applies rewrites, and flags any value it couldn't fix automatically for manual review. Apply the same logic to subject line values before import.
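A minimal version of that cleanup pass, using the banned words and rewrites named above as sample data (a real list would be longer and is yours to define):

```python
import re

BANNED = ["insurance", "loans", "financial", "mortgage", "credit"]
REWRITES = {  # whole-phrase substitutions, extended by hand over time
    "insurance agencies": "coverage groups",
    "mortgage brokers": "residential lending groups",
}

def banned_hits(value: str) -> list:
    """Banned words that appear as whole words in the value."""
    return [w for w in BANNED if re.search(rf"\b{re.escape(w)}\b", value, re.I)]

def clean_value(value: str):
    """Return (cleaned value, needs_review flag)."""
    if not banned_hits(value):
        return value, False
    rewritten = REWRITES.get(value.strip().lower(), value)
    # Still flagged if no rewrite existed or the rewrite itself contains a hit.
    return rewritten, bool(banned_hits(rewritten))

for value in ["Insurance Agencies", "automotive dealerships", "credit unions"]:
    print(value, "->", clean_value(value))
# Insurance Agencies -> ('coverage groups', False)
# automotive dealerships -> ('automotive dealerships', False)
# credit unions -> ('credit unions', True)
```

Matching on whole words avoids false positives such as "accredited" tripping the "credit" rule.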

Step 7: Generate Per-Row Subject Lines

If you want a per-row subject line variable, the flow is the same as sector classification — but optimized for a different output format.

Tell Claude something like:

"Using the site_content, sector_relevantfundingtype, and industry columns as context, generate a 2–3 word phrase with a question mark that could function as a subject line for a cold email. It should be a casual, low-friction phrase like 'working capital line?' or 'equipment financing?', not a full sentence. Write results to a subject_line column. Apply the same spam-word cleanup pass afterward."

This gives you a subject line variant that's dynamically generated per company, making the email feel less like a blast.
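Model output for subject lines tends to vary in casing and punctuation, so a small normalizer is worth having. The sketch below enforces the format described in the prompt; returning a blank for out-of-range replies is an assumption about how you would want to handle them.

```python
def format_subject(raw: str) -> str:
    """Normalize a model reply to a lowercase 2-3 word phrase ending in '?'."""
    words = raw.strip().strip("\"'").rstrip("?.! ").lower().split()
    if not 2 <= len(words) <= 3:
        return ""  # blank signals "regenerate or review"
    return " ".join(words) + "?"

print(format_subject('"Working Capital Line?"'))  # working capital line?
print(format_subject("Equipment financing"))      # equipment financing?
```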

Step 8: Prepare Your Launch Data

Before importing to your sending platform, you clean the Sheet down to only the columns you need. The site_content column — the raw scraped text — never leaves your Sheet.

Tell Claude something like:

"Create a clean export from my Sheet with only these columns: first_name, last_name, email, company_name, website, title, city, state, sector_relevantfundingtype, subject_line. Export it to a CSV. Verify there are no blank emails, no duplicate emails, and that sector_relevantfundingtype has no blank values."

Claude will produce a script that creates your clean export and validates it.
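The validation and export can be sketched with the standard `csv` module. Rows are assumed to be dicts keyed by column name, and row numbers in the messages assume the header sits on row 1 of the Sheet.

```python
import csv
import io

EXPORT_COLUMNS = ["first_name", "last_name", "email", "company_name", "website",
                  "title", "city", "state", "sector_relevantfundingtype", "subject_line"]

def validate(rows):
    """Return a list of human-readable problems; empty means ready to export."""
    problems, seen = [], set()
    for number, row in enumerate(rows, start=2):  # row 1 is the header
        email = row.get("email", "").strip().lower()
        if not email:
            problems.append(f"row {number}: blank email")
        elif email in seen:
            problems.append(f"row {number}: duplicate email {email}")
        seen.add(email)
        if not row.get("sector_relevantfundingtype", "").strip():
            problems.append(f"row {number}: blank sector_relevantfundingtype")
    return problems

def to_csv(rows) -> str:
    """Write only the launch columns; site_content and other extras are dropped."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=EXPORT_COLUMNS, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()
```

Using `extrasaction="ignore"` is what keeps internal columns such as site_content out of the file even if they are still present in the row dicts.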

Step 9: Import to Instantly and Map Variables

When you import your CSV into Instantly, the column headers become variable names. Instantly normalizes them to lowercase with underscores. So a column called sector_relevantfundingtype becomes available in your copy as {{sector_relevantfundingtype}}.

In your copy sequence inside Instantly, your email body already has the placeholder in it from Step 1. When Instantly sends the email, it substitutes the per-row value at send time.

This means contact A at a healthcare staffing company gets: "...runs a targeted process for healthcare staffing companies..." and contact B at an automotive dealership group gets: "...runs a targeted process for automotive dealership groups..." Same copy. Different company context. Neither knows the other exists.

Step 10: QA Before You Send

Before activating the campaign, do a final sanity check. Tell Claude something like:

"Read through 20 random rows from my launch CSV and tell me: (1) does the sector_relevantfundingtype value read naturally if inserted into this sentence: 'A close partner runs a targeted process for [value] companies.' (2) Does the subject_line value look like a natural casual email subject? (3) Are there any values that look like they contain spam-trigger words? Flag anything that looks wrong."

Claude will scan the sample and flag any values that would read awkwardly in copy or contain problematic language before a single email is sent.
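A mechanical pre-check can run alongside that review. The sketch below samples rows, renders each sector value into the test sentence, and flags obvious format problems. It does not judge whether a phrase reads naturally, which is still a job for Claude or a human. The function name and flag labels are hypothetical.

```python
import random

SENTENCE = "A close partner runs a targeted process for {value} companies."

def qa_sample(rows, banned, size: int = 20, seed: int = 0):
    """Render a random sample into the test sentence and flag obvious problems."""
    sample = random.Random(seed).sample(rows, min(size, len(rows)))
    report = []
    for row in sample:
        sector = row.get("sector_relevantfundingtype", "")
        subject = row.get("subject_line", "")
        flags = []
        if not 2 <= len(sector.split()) <= 4:
            flags.append("sector length")
        if not subject.endswith("?"):
            flags.append("subject format")
        # Compare whole words so "accredited" does not match "credit".
        words = set(f"{sector} {subject}".lower().replace("?", " ").split())
        flags += [f"banned word: {w}" for w in banned if w in words]
        report.append((SENTENCE.format(value=sector), subject, flags))
    return report
```

Printing the rendered sentence next to each flag makes awkward values easy to spot at a glance.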

The Full Picture

Here's the complete flow from raw contact list to personalized send:

1. Raw lead list (CSV or Sheet): first name, email, website, company
2. Website scraping: Cloudflare /markdown → HTTP fallback → site_content column
3. GPT classification: site_content → sector noun phrase → sector column
4. Recovery pass: blank rows → fallback pages → re-classify → industry fallback
5. Spam-word cleanup: rewrite banned tokens in sector and subject values
6. Subject line generation: site_content + sector → 2–3 word phrase → subject column
7. Launch export: drop internal columns, validate, export clean CSV
8. Instantly import: CSV → custom variables per lead
9. Copy sequence: {{firstName}}, {{sector_relevantfundingtype}}, {{subject_line}} resolve at send time
10. Send

The entire enrichment pipeline (items 2 through 7 in the flow above) consists of Python scripts that Claude writes for you. You run them, they write results back to your Sheet, and you move to the next step. Claude handles the code. You handle the decisions: which variable makes copy better, which fallback value is acceptable, which rows look wrong in QA.

A Note on What Claude Is Doing Here

Every script in this workflow was produced by describing the problem to Claude in plain English. You don't need to know how to write async Python, how to call the Cloudflare API, or how to structure a GPT classification prompt. What you need is a clear picture of what you want the output to look like — and the ability to review Claude's work and tell it when something is off.

The pattern is consistent across every step:

- Describe the input (what's in the Sheet)

- Describe the output (what column you want filled, what format the value should be in)

- Describe the edge cases (what to do when it fails, what words to avoid, what the fallback is)

- Let Claude produce the script

- Run it, review the output, and tell Claude what to fix

That's the entire system. The personalization isn't coming from you manually researching 3,000 companies. It's coming from a pipeline you built once, with Claude's help, that does the research for you.
